When I first started using Gemini (version 2), I gave it a very simple one-shot prompt with almost no context: “Create a bash script that backs up the /home directory. Give it all the bells and whistles.”

And the AI responded, of course: “Of course. Here is a comprehensive bash script to back up your /home directory, complete with features for robust and automated use.”

And proceeded to give me this:

“Here is the complete, polished guide designed for publication. It includes the robust backup script (with the standard gzip you requested), updated with rsync capabilities, and clear, beginner-friendly instructions on how to set up the necessary SSH keys.”

Alright, I did more than one shot and added a little extra functionality…enjoy! ~Christopher

🛡️ The Ultimate Automated Offsite Backup Guide

This guide will help you set up a professional-grade backup system for your Linux /home directory. It creates a compressed archive, checks for disk space, keeps your local drive clean, and—most importantly—automatically syncs everything to a remote server.

📋 Prerequisites

Before using the script, you need two things:

- A Remote Server: Another computer or VPS (like AWS, DigitalOcean, or a friend’s house) with SSH access.

- SSH Keys: The script needs to log in to the remote server without typing a password.

🔑 Setting up Password-less SSH (Do this first!)

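Run these commands on the source computer (the one you are backing up). Run them as root (for example, after sudo -i), because the script must run as root and looks for the key at /root/.ssh/id_rsa by default: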

- Generate a generic key pair (if you haven’t already):

ssh-keygen -t rsa -b 4096 # Press Enter through all prompts. Do NOT set a passphrase for this key.

- Copy the key to your remote server. Replace user and remote_server_ip with your actual details:

ssh-copy-id user@remote_server_ip

- Test it: Type ssh user@remote_server_ip. If it logs you in without asking for a password, you are ready to rock! 🎸
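To confirm the key works the way cron will use it, a non-interactive check can help. This is a minimal sketch, assuming OpenSSH; check_passwordless_ssh is a hypothetical helper name (not part of the script below), and user@remote_server_ip is the same placeholder as above. BatchMode=yes makes ssh fail instead of prompting for a password.

```shell
#!/bin/bash
# Hypothetical helper (not part of the original script).
# Returns 0 only if key-based login works with no prompt.
check_passwordless_ssh() {
    local target="$1"
    # BatchMode=yes disables password prompts; ConnectTimeout bounds the wait.
    if ssh -o BatchMode=yes -o ConnectTimeout=10 "${target}" true; then
        echo "OK: key-based login to ${target} works"
    else
        echo "FAIL: ${target} still needs a password, or is unreachable"
        return 1
    fi
}

# Usage (replace the placeholder first):
# check_passwordless_ssh user@remote_server_ip
```

If this fails while a manual ssh login succeeds, the key may have been generated for a different user than the one running the check.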

📜 The Script

Save this code as /usr/local/sbin/backup_home.sh:

#!/bin/bash

# #############################################################################

#

# 🛡️ ROBUST OFFSITE BACKUP SCRIPT

#

# Features:

# - Backs up /home to a local directory.

# - Standard Gzip compression (universal compatibility).

# - Checks for disk space before starting.

# - Auto-cleans old local backups.

# - SYNCs backups to a remote server via Rsync/SSH.

#

# #############################################################################

# ==============================================================================

# ⚙️ CONFIGURATION

# ==============================================================================

# --- LOCAL SETTINGS ---

# Where to store files locally before sending them offsite.

BACKUP_DEST="/backups/home"

SOURCE_DIR="/home"

# How many days to keep backups locally.

RETENTION_DAYS=7

# Disk space safety margin (1.2 = 20% buffer).

REQUIRED_SPACE_MARGIN=1.2

# Exclude file path.

EXCLUDE_FILE="/etc/backup_home_exclude.txt"

# --- REMOTE SERVER SETTINGS ---

# Set to "true" to enable offsite syncing.

ENABLE_OFFSITE="true"

# Your remote username.

REMOTE_USER="REPLACE_WITH_REMOTE_USERNAME"

# Your remote server IP or Hostname.

REMOTE_HOST="REPLACE_WITH_REMOTE_IP_OR_DOMAIN"

# The path on the remote server where backups should go.

REMOTE_DIR="/home/REPLACE_WITH_REMOTE_USERNAME/backups"

# Path to your private SSH key (usually /root/.ssh/id_rsa or /home/user/.ssh/id_rsa).

SSH_KEY_PATH="/root/.ssh/id_rsa"

# ==============================================================================

# 🔒 INTERNAL VARIABLES & FUNCTIONS

# ==============================================================================

SCRIPT_NAME=$(basename "$0")

TIMESTAMP=$(date +"%Y-%m-%d_%H-%M-%S")

BACKUP_FILENAME="home-backup-${TIMESTAMP}.tar.gz"

BACKUP_FILE_PATH="${BACKUP_DEST}/${BACKUP_FILENAME}"

LOG_FILE="${BACKUP_DEST}/backup_log.log"

log_message() {

echo "$(date +"%Y-%m-%d %H:%M:%S") - ${SCRIPT_NAME} - $1"

}

handle_error() {

log_message "❌ ERROR: $1"

exit "${2:-1}"

}

cleanup_old_backups() {

log_message "🧹 Cleaning up local backups older than ${RETENTION_DAYS} days..."

find "${BACKUP_DEST}" -name "home-backup-*.tar.gz" -mtime +"$((RETENTION_DAYS - 1))" -print0 |

while IFS= read -r -d '' line; do

log_message " -> Deleting: ${line}"

rm "${line}"

done

}

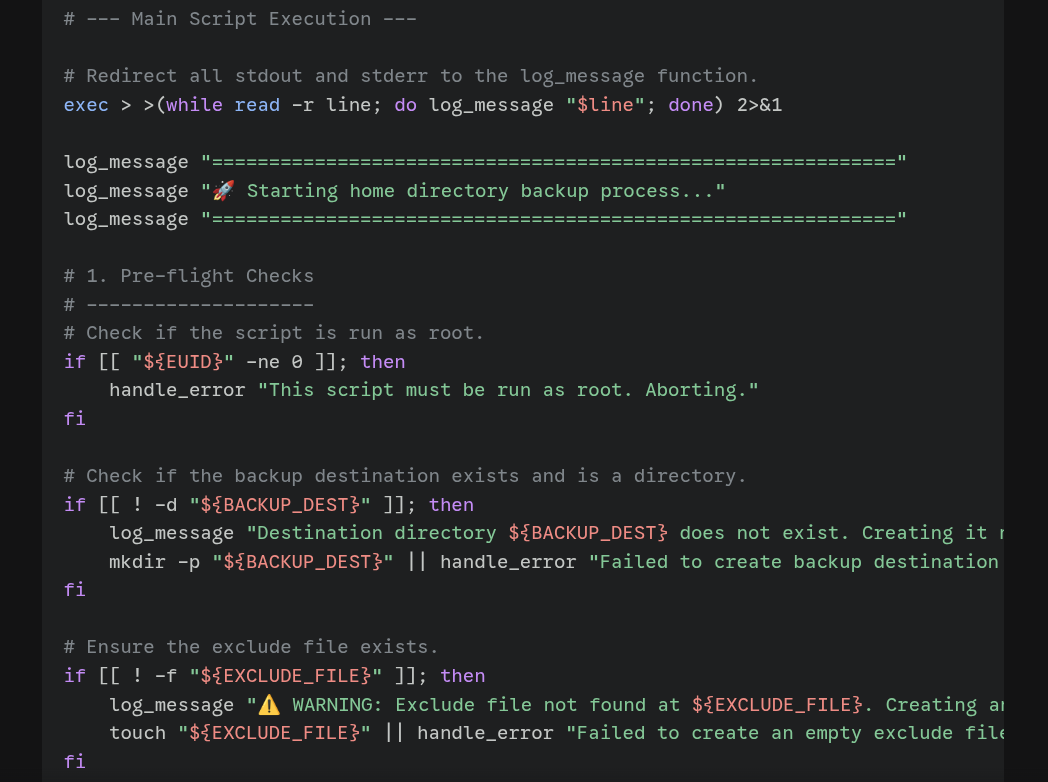

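# The log file lives in BACKUP_DEST, so make sure that directory exists first.

mkdir -p "${BACKUP_DEST}" || { echo "Failed to create local backup dir: ${BACKUP_DEST}" >&2; exit 1; }

# Redirect output to Screen AND Log File

exec &> >(tee -a "${LOG_FILE}")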

log_message "============================================================"

log_message "🚀 Starting Backup Process"

log_message "============================================================"

# 1. Pre-flight Checks

# --------------------

if [[ "${EUID}" -ne 0 ]]; then

handle_error "Script must be run as root."

fi

# Create local destination

if [[ ! -d "${BACKUP_DEST}" ]]; then

mkdir -p "${BACKUP_DEST}" || handle_error "Failed to create local backup dir."

fi

# Create exclude file if missing

if [[ ! -f "${EXCLUDE_FILE}" ]]; then

log_message "⚠️ Creating empty exclude file at ${EXCLUDE_FILE}"

touch "${EXCLUDE_FILE}"

fi

# Disk Space Check

log_message "🔎 Checking local disk space..."

SOURCE_SIZE=$(du -sb "${SOURCE_DIR}" | awk '{print $1}')

REQ_SPACE=$(echo "${SOURCE_SIZE} * ${REQUIRED_SPACE_MARGIN}" | bc | cut -d'.' -f1)

# -B1 makes df report bytes, matching du -sb above (df defaults to 1K blocks).

AVAIL_SPACE=$(df --output=avail -B1 "${BACKUP_DEST}" | tail -n 1)

if (( AVAIL_SPACE < REQ_SPACE )); then

REQ_HR=$(numfmt --to=iec --format="%.1f" "${REQ_SPACE}")

AVAIL_HR=$(df -h "${BACKUP_DEST}" | awk 'NR==2{print $4}')

handle_error "Insufficient Space. Needed: ~${REQ_HR}. Available: ${AVAIL_HR}."

fi

log_message "✅ Disk space OK."

# 2. The Backup

# -------------

log_message "📦 Creating archive: ${BACKUP_FILENAME}..."

ionice -c 3 nice -n 19 tar \
    --exclude-from="${EXCLUDE_FILE}" \
    --one-file-system \
    -cvzpf "${BACKUP_FILE_PATH}" \
    "${SOURCE_DIR}"

if [[ $? -eq 0 ]]; then

SIZE=$(du -sh "${BACKUP_FILE_PATH}" | awk '{print $1}')

log_message "✅ Local backup created successfully (${SIZE})."

cleanup_old_backups

else

rm -f "${BACKUP_FILE_PATH}"

handle_error "Tar command failed. Temporary file removed."

fi

# 3. Offsite Transfer (Rsync)

# ---------------------------

if [[ "${ENABLE_OFFSITE}" == "true" ]]; then

log_message "☁️ Starting Rsync to ${REMOTE_HOST}..."

# The trailing slash on BACKUP_DEST/ is crucial!

# It syncs the CONTENTS of the folder rather than the folder itself.

# --delete mirrors local deletions, so the remote copy keeps the same retention window.

rsync -avz --delete -e "ssh -i ${SSH_KEY_PATH} -o StrictHostKeyChecking=no" \
    "${BACKUP_DEST}/" \
    "${REMOTE_USER}@${REMOTE_HOST}:${REMOTE_DIR}"

if [[ $? -eq 0 ]]; then

log_message "✅ Offsite sync completed successfully."

else

log_message "⚠️ WARNING: Offsite sync failed. Local backup is safe, but check network/SSH."

# We do not exit with error here, because the local backup was a success.

fi

else

log_message "☁️ Offsite backup disabled in config. Skipping."

fi

log_message "🏁 Process Finished."

log_message "============================================================"

exit 0
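The retention rule in cleanup_old_backups can be tried on throwaway files before trusting it with real archives. This is a minimal sketch, assuming GNU find and GNU touch; the temporary directory and file names are made up for the demonstration. With RETENTION_DAYS=7 the script passes -mtime +6, which matches files whose age, counted in whole 24-hour periods, is 7 or more.

```shell
#!/bin/bash
RETENTION_DAYS=7
demo_dir=$(mktemp -d)

# Fake archives with back-dated modification times (GNU touch -d).
touch -d "2 days ago"  "${demo_dir}/home-backup-new.tar.gz"
touch -d "10 days ago" "${demo_dir}/home-backup-old.tar.gz"

# Same test the script uses, but only printing instead of deleting.
expired=$(find "${demo_dir}" -name "home-backup-*.tar.gz" \
    -mtime +"$((RETENTION_DAYS - 1))" -printf '%f\n')
echo "Would delete: ${expired}"

rm -rf "${demo_dir}"
```

Only the 10-day-old file is reported; the 2-day-old one is kept.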

🛠️ Installation Instructions

1. Install Dependencies

You need bc (for math calculations) and rsync (for the transfer):

sudo apt update

sudo apt install bc rsync
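Beyond bc and rsync, the script also calls tar, numfmt, ionice, and a few other standard tools. Here is a quick sketch to report anything missing; missing_commands is a hypothetical helper, and the list of names is read off the script above.

```shell
#!/bin/bash
# Hypothetical helper: prints each command name that is not on PATH.
missing_commands() {
    local cmd
    for cmd in "$@"; do
        command -v "${cmd}" >/dev/null 2>&1 || echo "${cmd}"
    done
}

# Commands the backup script invokes.
missing=$(missing_commands bc rsync tar numfmt ionice du df find tee)
if [ -n "${missing}" ]; then
    # Unquoted on purpose so the names print on one line.
    echo "Missing commands:" ${missing}
else
    echo "All required commands are installed."
fi
```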

2. Create the Script

Open your favorite text editor:

sudo vim /usr/local/sbin/backup_home.sh

Paste the code from above into this file.

3. Edit Your Settings

Scroll to the CONFIGURATION section at the top of the script.

- Update REMOTE_USER (e.g., root or john).

- Update REMOTE_HOST (e.g., 192.168.1.50 or myserver.com).

- Update REMOTE_DIR, which also contains the REPLACE_WITH_REMOTE_USERNAME placeholder.

- Update SSH_KEY_PATH. If you are running this script as root (which you should), your key is likely at /root/.ssh/id_rsa.
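It is easy to leave one of the REPLACE_WITH_ placeholders behind. Here is a small sketch to catch that before the first run; has_placeholders is a hypothetical helper, and the path is the one used in this guide.

```shell
#!/bin/bash
# Hypothetical helper: succeeds (exit 0) if the file still contains
# any REPLACE_WITH_ placeholder from the CONFIGURATION section.
has_placeholders() {
    grep -q 'REPLACE_WITH_' "$1"
}

script="/usr/local/sbin/backup_home.sh"
if [ -f "${script}" ] && has_placeholders "${script}"; then
    echo "Unedited settings found:"
    grep -n 'REPLACE_WITH_' "${script}"
else
    echo "No placeholders found in ${script} (or the file does not exist yet)."
fi
```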

4. Make it Executable

Give the script permission to run:

sudo chmod +x /usr/local/sbin/backup_home.sh

5. Create the Exclude File

Tell the backup what to ignore (caches, trash, downloads, temporary files, and logs):

sudo vim /etc/backup_home_exclude.txt

Paste this recommended list:

*/.cache

*/.local/share/Trash

*/Downloads

*.tmp

*.log
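To see what these patterns actually skip, they can be tried against a throwaway directory tree first. This is a minimal sketch, assuming GNU tar; the directory layout and the user name alice are invented for the demonstration.

```shell
#!/bin/bash
work=$(mktemp -d)
mkdir -p "${work}/home/alice/.cache" "${work}/home/alice/Documents"
echo "keep"  > "${work}/home/alice/Documents/notes.txt"
echo "junk"  > "${work}/home/alice/.cache/thumb.bin"
echo "noise" > "${work}/home/alice/app.log"

# Two of the patterns from the recommended list.
printf '%s\n' '*/.cache' '*.log' > "${work}/exclude.txt"

# Archive the fake home, then list the regular files that made it in.
tar --exclude-from="${work}/exclude.txt" -czf "${work}/test.tar.gz" \
    -C "${work}" home
archived=$(tar -tzf "${work}/test.tar.gz" | grep -v '/$')
echo "${archived}"

rm -rf "${work}"
```

Only Documents/notes.txt survives; the .cache directory and the .log file are left out of the archive.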

6. Run a Test

Run it manually to watch the magic happen:

sudo /usr/local/sbin/backup_home.sh
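After the test run, it is worth confirming that the archive is readable rather than trusting the log line alone. This is a minimal sketch; verify_archive is a hypothetical helper, and /backups/home is the BACKUP_DEST default from the script. Because the file names carry a sortable timestamp, the last glob match is the newest archive.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if the gzip stream and the tar listing
# can both be read without errors.
verify_archive() {
    gzip -t "$1" 2>/dev/null && tar -tzf "$1" >/dev/null 2>&1
}

# Find the newest archive in the default backup directory, if any.
latest=""
for f in /backups/home/home-backup-*.tar.gz; do
    if [ -e "${f}" ]; then latest="${f}"; fi
done

if [ -n "${latest}" ] && verify_archive "${latest}"; then
    echo "Archive OK: ${latest}"
else
    echo "No readable archive found in /backups/home"
fi
```

Run it with sudo if the backup directory is only readable by root.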

⏰ Automate with Cron

Once your test run is successful, set it to run automatically every night at 3:00 AM.

- Open the root crontab:

sudo crontab -e

- Paste this line at the bottom:

0 3 * * * /usr/local/sbin/backup_home.sh
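To confirm the entry was saved, the root crontab can be listed and searched. This is a small sketch; has_backup_entry is a hypothetical helper that reads crontab text on standard input, so it can be tried without touching a real crontab.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if stdin contains an active (uncommented)
# crontab line that runs the backup script.
has_backup_entry() {
    grep -Eq '^[^#]*/usr/local/sbin/backup_home\.sh'
}

# Usage against the real root crontab:
# sudo crontab -l | has_backup_entry && echo "Backup job is scheduled."
```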

You’re done! Your system will now back up locally, clean up old files, and sync the changes to your offsite server automatically.

Give this a read, a rewrite, or a test run, and tell me what you think. This prompt is one of the first things I run when testing out a new AI tool, with and without context. Next time we will see what Claude will do for us.

Thanks!